We talked how to track database changes with one auditing table last month. If we want to replicate these changes into another database, then we might use multi-threading to gain the high performance in data ingestion. However, based on our previous design, we track changes to column level, which generates a serious concurrency control issue. Let’s think about, we have a data table,  with 10 columns updates at once, then all these changes are captured and inserted into my_audit_table. If at the same time, another table gets updated, then there might have some cross inserting in my_audit_table, which makes the multi-threading read even harder.

with 10 columns updates at once, then all these changes are captured and inserted into my_audit_table. If at the same time, another table gets updated, then there might have some cross inserting in my_audit_table, which makes the multi-threading read even harder.

Is there a better approach that we should go? In this case, it’s worth to re-design the audit_table. Let’s see how it looks like (in Oracle PL/SQL):

create table new_audit_table ( audit_id number not null, table_name varchar2(50), primary_key varchar2(300), -- concatenated pk columns with pre-defined delimiter primary_value varchar2(4000), -- concatenated pk column values with pre-defined delimiter action varchar2(10), -- INSERT, UPDATE, DELETE status varchar2(20), modified_by varchar2(100), modified_on date, modified_using varchar2(30), constraint new_audit_table_pk primary key (audit_id) );

Comparing previous design, we dropped column_name, old_value and new_value columns. The purpose is to avoid concurrency conflicts. We watch out table_name, compound primary_key and compound primary_value, which may determine which row gets updated, so we are able to capture row-level changes. Performance wise, it might not be worse than handling column-by-column changes, although we didn’t calculate it in detail.

Now let’s see how we handle the compound primary keys – for tables with more than one primary key.

IF (li_rowcount > 0) THEN ls_sql := 'select ucc.column_name from user_constraints uc, user_cons_columns ucc where uc.table_name = '''||upper(ps_table_name)||''' and uc.constraint_type = ''P'' and uc.constraint_name = ucc.constraint_name order by position'; OPEN pk_cursor FOR ls_sql; FOR i IN 1..li_rowcount LOOP FETCH pk_cursor INTO ls_pkstring; ls_primary_keys := ls_primary_keys || ',' || ls_pkstring; END LOOP; CLOSE pk_cursor; END IF; ls_primary_keys := substr(ls_primary_keys, 2, length(ls_primary_keys));

The last line outputs a concatenated string like: pk_colname1,pk_colname2,pk_colname3.

We may use similar approach to handle primary column values and store in new_audit_table through trigger.

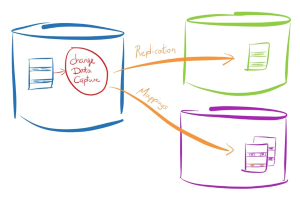

In this way, we may capture the entire table changes. As long as a row data changes, we know which its compound primary key and values, so we may capture it in new_audit_table. When we trace back, we use the action and status column to get status and trace back to original table from which we may get data for replication operation.

Should you have any questions in database design or database synchronization, please feel free to contact us. Stay tuned for our upcoming high performance enterprise database synchronization platform – LiveSync Automation.